[1]:

%matplotlib inline

import numpy as np

from matplotlib import pyplot as plt

from pyspectools.models.classes import SpecConstants, MoleculeDetective

/home/kelvin/anaconda3/lib/python3.7/site-packages/pyspectools/fitting.py:13: TqdmExperimentalWarning: Using `tqdm.autonotebook.tqdm` in notebook mode. Use `tqdm.tqdm` instead to force console mode (e.g. in jupyter console)

from tqdm.autonotebook import tqdm

Identifying Molecules using Probabilistic Deep Learning¶

One of the difficulties with rotational spectroscopy is figuring out what the identity of a molecule is after we’ve obtained a set of spectroscopic parameters. For example, you’ve gone off to the lab and measured a bunch of lines, and now you’ve determined \(A, B, C\) as well as measured \(a-\) and \(b-\)type transitions, but now you don’t know what that molecule is! Unfortunately, it’s not such a trivial task to go from Hamiltonian to molecular structure: while the rotational constants are related to the moments of inertia, and therefore the distribution of mass in space, the transformation from structure to constants is non-invertible; we lose information/dimensonality with each subsequent transformation. The task that we’re dealing with is to try and re-map these constants back into some information that can help identify the unknown molecule. Because this mapping is increasing in dimensionality with each step, we have to somehow be able to account for all possible parameters that originate from a single set of constants: in other words, what kind of molecules can result from a given set of \(A, B, C\).

This question in itself can be framed in terms of Bayes’ theorem:

Starting with the left hand side, we have the posterior distribution, \(p(M \vert A, B, C)\), which represents the likelihood of any molecule \(M\), given a set of rotational constants. This is precisely what we’re interested in. \(p(A, B, C \vert M)\), describes the distribution of possible \(A, B, C\), given a molecule, while \(p(A, B, C)\) represents the prior distribution, which encapsulates any experimental uncertainty we may have. The final term, \(p(M)\), describes all possible molecule space: this is somewhat of an abstract concept, but the idea is that this term represents the identity of every possible molecule ever. In fact, you could rephrase the equation above to this:

where the denominator is now an integral of \(A, B, C\) for molecule \(m\) that is a subset of all possible molecules \(M\). This is not so tractable. Enter probabilistic deep learning! The idea is to parametrize the equation, such that a neural network—when trained properly—encapsulates molecule space in a highly compressed manner. In doing so, we’re replacing the abstract concept of \(M\) with the model parameters, \(\theta\) in our Bayesian neural network:

For a given training set of molecules—which we try to be as encompassing as possible—\(\theta\) will learn to approximate \(M\) as neural networks have a nifty part of them being universal function approximators.

The routines now implemented in PySpecTools provide the user a high-level interface for performing inference. There are currently two main classes that we’re concerned with, SpecConstants, and MoleculeDetective. This notebook will explore usage and provide insight into how these classes could be used in your own case.

SpecConstants¶

The SpecConstants class is your gateway into inference. The design behind this class is to facilitate the proper propagation of uncertainty, and to generate samples for inference. The backend machinery for this class is the uncertainties package. Every parameter that you determine in a spectroscopic fit has some nominal uncertainty: when values are poorly constrained, the uncertainties are large and this must be accounted for by having correspondingly uninformative priors.

To illustrate the basic mechanics, the construction of a SpecConstants object requires at a minimum three rotational constants, \(A, B, C\), provided as strings. Here’s an example:

[2]:

unknown_molecule = SpecConstants(A="5702.472251(10)", B="5702.472251(52)", C="2721.312512(602)")

[3]:

# calling print(unknown_molecule) or just by itself will summarize the molecule

unknown_molecule

[3]:

{ 'A': 5702.472251+/-1e-05,

'B': 5702.472251+/-5.2e-05,

'C': 2721.312512+/-0.000602,

'__name__': 'LoyalIndolentViperSnake',

'delta': 8.462402523799186+/-4.109074024806016e-05,

'kappa': 1.0+/-3.552497270559369e-08,

'u_a': 0.0+/-3.0,

'u_b': 0.0+/-3.0,

'u_c': 0.0+/-3.0}

The SpecConstants class keeps track of the experimentally relevant parameters: the rotational constants, derived parameters \(\Delta\) (the inertial defect) and \(\kappa\) (the asymmetry parameter), and the dipole moments along each principle axis. The derived parameters are calculated automatically once you initialize the object, and you can see how every parameter has a +/- corresponding to its nominal uncertainty. One important thing to note is that the dipole moments are by

default all centered around zero with large uncertainties: we assume that you haven’t actually measured the dipole moment (not such an easy thing to do well). If you want to provide dipole moments, you will need to provide a uncertainties.ufloat value like so:

[4]:

from uncertainties import ufloat

unknown_molecule = SpecConstants(A="20340(100)", B="1702.472251(52)", C="1321.312512(602)", u_a=ufloat(2., 1.), u_b=ufloat(0., 0.), u_c=ufloat(0., 0.))

In this case, I’m confident that there is an \(a-\)type spectrum, but I don’t know what the magntiude of the dipole moment is and so I set a nominal value and a large uncertainty to it. I did not observe any transitions along \(b\) and \(c-\)axes, and so I’m turning them off by setting them to zero with no uncertainty. Also, because only \(a\)-type transitions are observed, the \(A\) constant is only determined to $:nbsphinx-math:pm`$100 MHz. *Everything has

uncertainty, and this framework will take it all into account!*. The uncertainties are used by the class to generate samples, which you can call by running ``SpecConstants.generate_samples(N)`, or simply SpecConstants(N):

[5]:

# Generate 50 samples based on the experimental data

samples = unknown_molecule(10)

[6]:

samples

[6]:

array([[ 2.02753602e+04, 1.70247220e+03, 1.32131320e+03,

-9.59780726e-01, 6.07065213e+01, 2.00181407e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.03497067e+04, 1.70247221e+03, 1.32131159e+03,

-9.59937702e-01, 6.07980516e+01, 1.34500523e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.02079049e+04, 1.70247224e+03, 1.32131305e+03,

-9.59637060e-01, 6.06233669e+01, 1.48251780e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.03602970e+04, 1.70247220e+03, 1.32131207e+03,

-9.59960037e-01, 6.08108304e+01, 4.55151766e-01,

0.00000000e+00, 0.00000000e+00],

[ 2.05556346e+04, 1.70247227e+03, 1.32131267e+03,

-9.60366724e-01, 6.10465461e+01, 2.08265664e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.03267296e+04, 1.70247227e+03, 1.32131283e+03,

-9.59889389e-01, 6.07696309e+01, 2.43033941e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.02419227e+04, 1.70247227e+03, 1.32131300e+03,

-9.59709621e-01, 6.06654158e+01, 1.55213573e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.03497633e+04, 1.70247216e+03, 1.32131375e+03,

-9.59938048e-01, 6.07974889e+01, 1.13604348e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.03583602e+04, 1.70247227e+03, 1.32131267e+03,

-9.59956017e-01, 6.08083075e+01, 4.01200724e+00,

0.00000000e+00, 0.00000000e+00],

[ 2.03989352e+04, 1.70247228e+03, 1.32131251e+03,

-9.60041167e-01, 6.08577313e+01, 1.20541280e+00,

0.00000000e+00, 0.00000000e+00]])

This function returns an array where each row corresponds to “an observation”, generated by sampling independent Gaussians with centers/widths based on the parameter value and uncertainty. The last three columns of each row are the dipole moments, and contrasted with our “well-determined” rotational constants, are actually all over the shop, and correspond with the fact that we have no clue what the dipole moments are, but we measured our experimental constants precisely. The same thing could be done for the rotational constants: if a parameter is poorly determined, just ascribe a large uncertainty to it.

MoleculeDetective¶

Now comes the cool bit: doing the actual inference using our pre-trained deep learning model. This is handled by the MoleculeDetective class, which can be instantiated just like so:

[7]:

model = MoleculeDetective()

The main purpose of this class is to handle running the model in the background without requiring you, the user, to know how to write/read code in PyTorch. The only inputs that you need are a SpecConstants object, and the expected composition of the molecule. The latter needs a bit more clarification: because molecule space is so deeply entangled, the model was developed to perform conditional inference: i.e. what are the identifying features, given this set of rotational constants and if

it contains oxygen, for example. The composition argument lets you choose a specific composition by providing an integer:

Integer |

Composition |

|---|---|

0 |

Pure hydrocarbon |

1 |

Oxygen-bearing |

2 |

Nitrogen-bearing |

3 |

Oxygen/Nitrogen-bearing |

Alternatively, if you have no idea what the composition is, then the default will be random compositions. This is helpful if you just want to see what the range of possible formulae are. You can perform inference by calling run_inference, or by using the object like a function:

[8]:

# Run our unknown, using a pure hydrocarbon composition

results = model(unknown_molecule, composition=0, N=1000)

This returns a MoleculeResult object, which holds the prediction results and implements some convenience functions for analysis, namely analyze(). More will be implemented soon! The three (well, two that you’d normally be interested in) results are stored as attributes in the MoleculeResult object. These are just straight NumPy arrays, and you can use violin plots to visualize the distribution of whatever parameter you’re interested in:

[9]:

# Formula sampling

results.formulas;

# Functional groups

results.functional_groups;

Each row of these results corresponds to an “observation”—basically, what you asked the neural network to predict as the formula/functional group given the corresponding set of rotational constants and the composition. If you call results.analyze(), it returns a Plotly Figure object which provides some interactivity; this is still in development and I hope to make this more streamlined soon. The thing that the function returns is a dictionary containing summary statistics of the run. We

can also use matplotlib to make violin plots to visualize the results:

[10]:

fig, ax = plt.subplots()

# Take the transpose of the array; matplotlib expects observations as columns...

ax.violinplot(results.formulas.T)

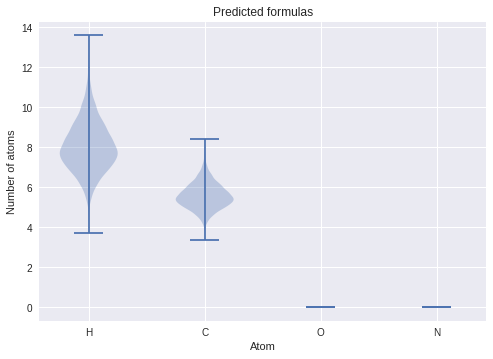

ax.set(title="Predicted formulas", xticks=[1, 2, 3, 4,], xticklabels=["H", "C", "O", "N"], ylabel="Number of atoms", xlabel="Atom");

The way you interpret these plots is that the width of the “violin” tells you the likelihood/probability density of the corresponding \(y\)-axis value. In this case, it suggests our molecule has the approximate formula \(\mathrm{H_8C_{5/6}}\). Notice that the number of hydrogens is the most uncertain—because hydrogen is so light, it doesn’t contribute significantly to the determination of the spectroscopic constants, and is appropriately reflected in our prediction!

Say if we found out that the molecule contained oxygen, we can then set composition=1 in our inference call:

[11]:

# Run our unknown, using a pure hydrocarbon composition

results = model(unknown_molecule, composition=1, N=1000)

[12]:

fig, ax = plt.subplots()

# Take the transpose of the array; matplotlib expects observations as columns...

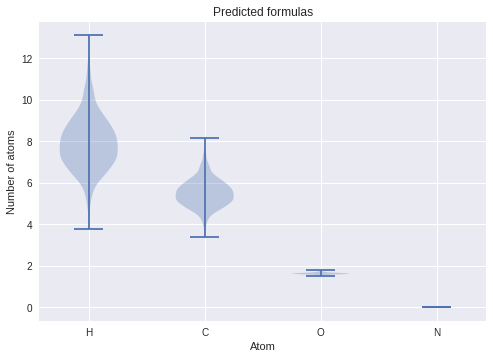

ax.violinplot(results.formulas.T)

ax.set(title="Predicted formulas", xticks=[1, 2, 3, 4,], xticklabels=["H", "C", "O", "N"], ylabel="Number of atoms", xlabel="Atom");

You’ll notice that now oxygen is predicted with some likelihood. One thing to keep in mind, however, that these results tend to be overconfident: the current state-of-the-art Bayesian neural networks tend to underestimate its uncertainty, as we’re only approximating the true posterior. The significant improvement to this version of the model compared to the one described in our paper is that only one set of weights are used for every composition: the same model is used to predict each composition. The idea behind this is to try and capture two separate aspects of the problem: (1) the distribution of mass is a physical property, and does not “change” persay between different compositions, and (2) compositions impose structural differences, for example \(sp^3\) nitrogen (e.g. amines) bond significantly differently from \(sp\) (e.g. nitrile) groups. In other words, the model was fine-tuned to strike a balance between accuracy and generalizability: we want it to be sufficiently accurate to make reliable predictions, but it should differ enough for vastly different compositions and constants. There is no perfect model, or a free lunch!

Conclusions¶

…And that’s how you perform inference! The idea is that once you have a gauge of what the size of the molecule, and what the potential functional groups are you can kind of start piecing things together and come up with viable candidates for your unknown molecule. As we get more and more sophisticated models, the plan is to hopefully make this process increasingly streamlined, ultimately getting to the point of a model generating structures directly from your parameters.

For more details about how the models were trained—although the process was modified for PySpecTools usage—please refer to our latest paper on the matter. If you use these models or PySpectools in general for your work, please let me know!